Symphony of Servos

Many non-literate users feel excluded from traditional information environments; by contrast, this installation creates a welcoming space where they can begin to see themselves as learners.

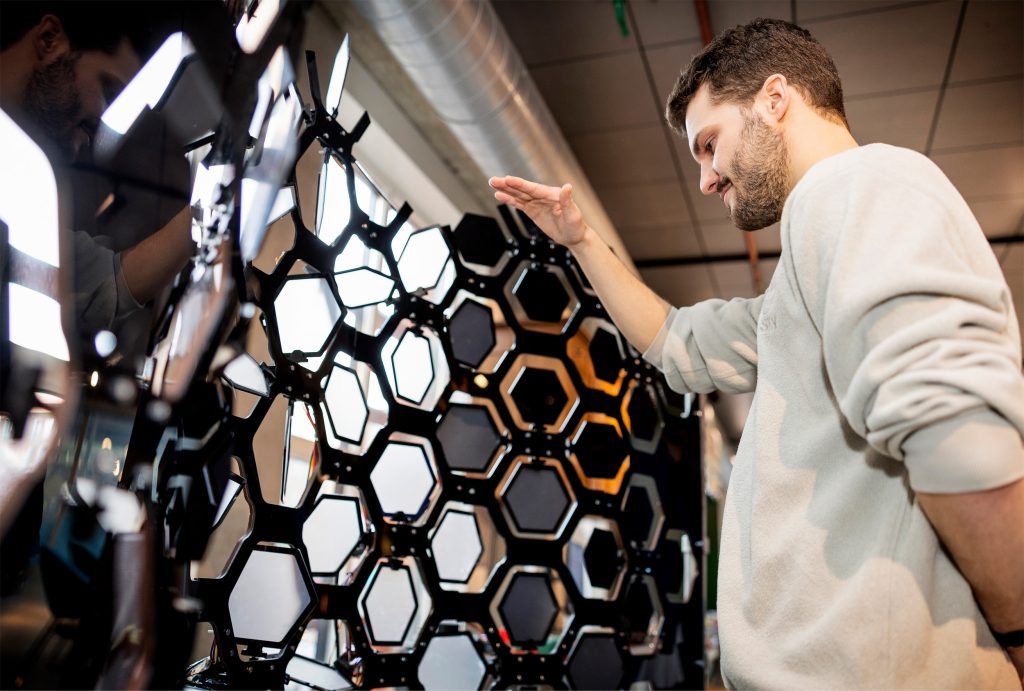

When you stand in front of it, a camera detects the position of your hand, and the panels respond. They move as you move – but more importantly, each panel is linked to a musical element. By interacting with the wall, you’re not just triggering movement; you’re actively shaping a music track. You might bring out the piano, soften the drums, or highlight a melody simply by guiding your hand across the surface.

By creating sound through simple movement, users gain an immediate, empowering sense of achievement, encouraging confident participation without fear of failure and supporting first steps toward literacy.

Students: Nicholas Jester, Tajin Popo, Silve Pott & Leon Stil

Everything for the base of the installation was laser cut so that the parts were precise and could be produced at full scale. To prevent the brittle PMMA from breaking, we added a 6 mm wooden backing, which reinforced the structure without compromising the visual design.

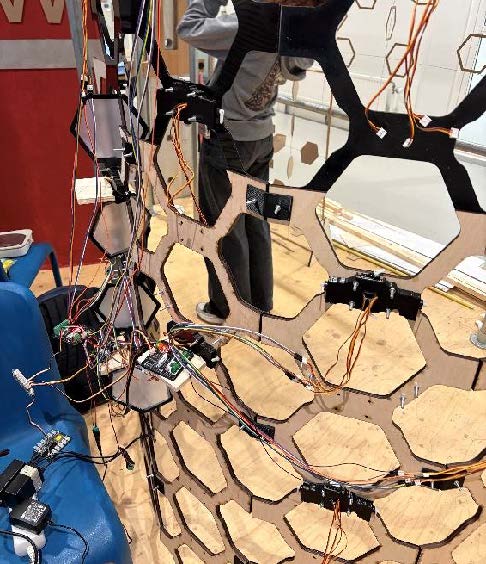

Because our design required a large number of servos, we needed a servo cover that could securely hold all components using a snap-fit mechanism while remaining lightweight.

We had two types of film: a white translucent film and a black film. By layering and combining them, we created a third, intermediate value, which produced the gradient across the panels. We cut all of the individual film pieces for the panels by hand.

Each servo needed three wires, which made wiring and cable management a time-consuming part of the prototyping process.

In total, the system consisted of 36 SG90 servos connected to three motor driver boards. Using three motor boards required three separate I2C connections.
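One way to keep 36 servos on three boards manageable in code is to give every servo a single global index and derive the board and channel from it. The sketch below is illustrative only: it assumes an even split of 12 servos per board, and the pulse range of 500 to 2400 µs is a commonly used hobby-servo value, not a measurement from this build. The names `servo_address` and `angle_to_pulse_us` are hypothetical.

```python
from typing import Tuple

SERVO_COUNT = 36   # from the text
BOARD_COUNT = 3    # from the text
# Assumption: servos are split evenly, 12 per board.
SERVOS_PER_BOARD = SERVO_COUNT // BOARD_COUNT


def servo_address(servo_index: int) -> Tuple[int, int]:
    """Map a global servo index (0..35) to (board, channel)."""
    if not 0 <= servo_index < SERVO_COUNT:
        raise ValueError(f"servo_index must be in 0..{SERVO_COUNT - 1}")
    return divmod(servo_index, SERVOS_PER_BOARD)


def angle_to_pulse_us(angle_deg: float,
                      min_us: float = 500.0,
                      max_us: float = 2400.0) -> float:
    """Linearly map an angle in degrees (clamped to 0..180) to a pulse width.

    min_us and max_us are assumed values and should be calibrated
    against the actual servos.
    """
    angle = min(max(angle_deg, 0.0), 180.0)
    return min_us + (max_us - min_us) * angle / 180.0


if __name__ == "__main__":
    board, channel = servo_address(17)
    print(board, channel, angle_to_pulse_us(90))
```

With this layout, the rest of the program can address panels by index alone and leave the board and channel lookup to one function.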

Conveying the goal of our installation required a lot of coding. The first step was implementing hand tracking to detect when a user’s hand was positioned in front of the wall; for this, we used Google’s MediaPipe library.
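MediaPipe reports hand landmarks as coordinates normalized to the image width and height, so a tracked hand still has to be translated into a panel on the wall. The following is a minimal sketch of that translation, not the code used in the installation. It assumes a hypothetical 6 x 6 panel grid and a camera image that covers the wall exactly; `panel_under_hand` is an illustrative name.

```python
# Assumption: the 36 panels form a 6 x 6 grid (layout not stated in the text).
GRID_COLS = 6
GRID_ROWS = 6


def panel_under_hand(x_norm: float, y_norm: float, mirror: bool = True) -> int:
    """Return the index (0..35, row-major) of the panel under the hand.

    x_norm and y_norm are normalized image coordinates in [0, 1].
    With mirror=True the x axis is flipped, which is what a viewer
    facing the camera usually expects.
    """
    if mirror:
        x_norm = 1.0 - x_norm
    # Clamp so that landmarks slightly outside the frame still map to an edge panel.
    x = min(max(x_norm, 0.0), 1.0)
    y = min(max(y_norm, 0.0), 1.0)
    col = min(int(x * GRID_COLS), GRID_COLS - 1)
    row = min(int(y * GRID_ROWS), GRID_ROWS - 1)
    return row * GRID_COLS + col


if __name__ == "__main__":
    print(panel_under_hand(0.5, 0.5, mirror=False))
```

In a real setup the camera rarely frames the wall perfectly, so a calibration step that maps image coordinates to wall coordinates would normally come before this lookup.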

We also needed depth information to determine the distance between the hand and the wall. To achieve this, two cameras were mounted in parallel, side by side, effectively creating a basic 3D vision system.
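For two parallel cameras, the standard way to estimate distance is from disparity: the same point appears shifted horizontally between the left and right images, and depth is Z = f · B / d, where f is the focal length in pixels, B the distance between the cameras, and d the shift in pixels. The sketch below illustrates that relation under idealized assumptions (rectified images, identical cameras); the function name and the numbers in the example are hypothetical, not values from our setup.

```python
from typing import Optional


def depth_from_disparity(x_left_px: float,
                         x_right_px: float,
                         focal_length_px: float,
                         baseline_m: float) -> Optional[float]:
    """Estimate the distance (in meters) to a point seen by both cameras.

    x_left_px / x_right_px: horizontal pixel position of the same hand
    landmark in the left and right image.
    Returns None when the disparity is not positive, because the point
    is then effectively at infinity or the match is wrong.
    """
    disparity = x_left_px - x_right_px
    if disparity <= 0:
        return None
    return focal_length_px * baseline_m / disparity


if __name__ == "__main__":
    # Hypothetical values: 800 px focal length, cameras 10 cm apart.
    print(depth_from_disparity(400.0, 360.0, 800.0, 0.10))
```

Because depth is inversely proportional to disparity, precision drops quickly as the hand moves away from the cameras, which is acceptable when only the region close to the wall matters.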